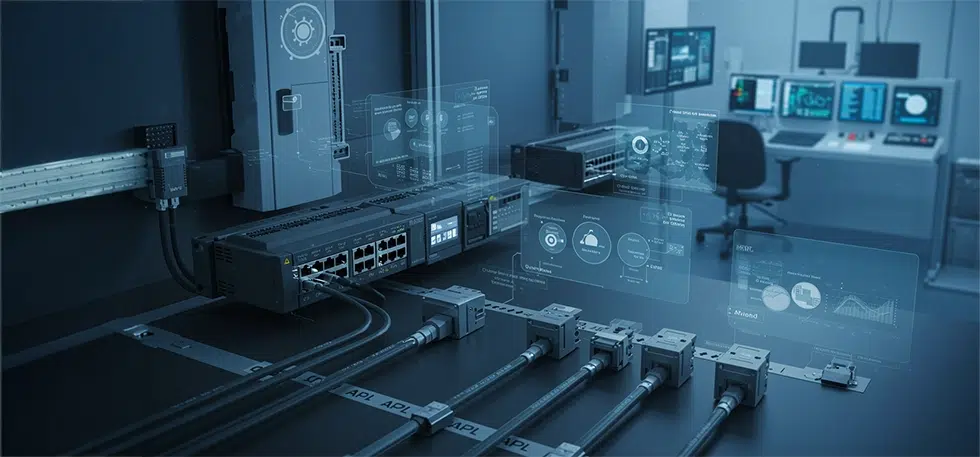

The Protocols Powering the Next-Gen Industrial Network Revolution – Time-Sensitive Networking (TSN) and Advanced Physical Layer (APL)

Why Legacy Industrial Networks Are No Longer Enough

Consider a plant that runs PROFIBUS for instrumentation, Modbus TCP for PLC communication, and a proprietary protocol for drives. Such a plant often struggles to synchronize data across devices, which results in delayed diagnostics, inconsistent system behavior, and increased integration overhead.

These challenges are amplified by the rapid growth of high-density sensor deployments, tighter real-time control loops, and edge analytics, all of which stress legacy architectures and expose their latency, bandwidth, and scalability limitations. As IT and OT domains converge, enterprises need unified, deterministic, and secure communication frameworks that legacy systems cannot deliver.

TSN: Enabling Deterministic Ethernet for Industry 4.0

TSN relies on several core capabilities that decision makers should understand. Time synchronization (IEEE 802.1AS) gives every device a common sense of time, while scheduled traffic (802.1Qbv), frame preemption (802.1Qbu), and stream reservation and configuration (802.1Qcc) guarantee bounded latency and jitter for critical applications. Seamless redundancy (802.1CB) keeps operations running through link or node failures, and together these mechanisms unify IT and OT traffic on a single, scalable infrastructure instead of separate operational silos.
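To make the scheduling idea concrete, here is a minimal Python sketch of how a time-aware shaper in the spirit of 802.1Qbv decides which traffic classes may transmit at a given instant. The `GateEntry` type, the function names, and the 1 ms example schedule are hypothetical illustrations, not a vendor or standard API. Real gate control lists are configured through a bridge's management interface and include details this sketch leaves out, such as a separately configured cycle time and administrative versus operational lists.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class GateEntry:
    """One row of an illustrative (hypothetical) gate control list."""
    gate_mask: int    # bit i set means the gate for traffic class i is open
    interval_ns: int  # how long this row stays active, in nanoseconds


def _active_entry(gcl: List[GateEntry], base_time_ns: int,
                  now_ns: int) -> Tuple[GateEntry, int]:
    """Return the active row and the nanoseconds left before it ends.

    Simplifying assumptions: the cycle time is the sum of all row intervals,
    and now_ns is already the 802.1AS-synchronized network time.
    """
    cycle_ns = sum(entry.interval_ns for entry in gcl)
    if cycle_ns <= 0:
        raise ValueError("gate control list needs a positive cycle time")
    if now_ns < base_time_ns:
        raise ValueError("schedule has not started yet")
    offset = (now_ns - base_time_ns) % cycle_ns  # position within the cycle
    for entry in gcl:
        if offset < entry.interval_ns:
            return entry, entry.interval_ns - offset
        offset -= entry.interval_ns
    raise AssertionError("unreachable: offset always lies inside the cycle")


def open_classes(gcl: List[GateEntry], base_time_ns: int,
                 now_ns: int) -> List[int]:
    """List the traffic classes (0-7) whose gates are open at now_ns."""
    entry, _ = _active_entry(gcl, base_time_ns, now_ns)
    return [tc for tc in range(8) if entry.gate_mask & (1 << tc)]


def fits_before_gate_closes(gcl: List[GateEntry], base_time_ns: int,
                            now_ns: int, tc: int, frame_bytes: int,
                            link_bps: int) -> bool:
    """Check whether a frame of class tc can finish before its window ends.

    Ignores preamble, inter-frame gap, and windows that continue into the
    next row; a real shaper accounts for all of these.
    """
    entry, remaining_ns = _active_entry(gcl, base_time_ns, now_ns)
    if not entry.gate_mask & (1 << tc):
        return False  # gate for this class is closed right now
    bits = frame_bytes * 8
    tx_ns = -(-bits * 1_000_000_000 // link_bps)  # ceiling division
    return tx_ns <= remaining_ns


# Hypothetical 1 ms cycle: 200 us reserved for class 7, then 800 us for classes 0-6.
gcl = [GateEntry(0b1000_0000, 200_000), GateEntry(0b0111_1111, 800_000)]
print(open_classes(gcl, 0, 150_000))    # [7]
print(open_classes(gcl, 0, 1_250_000))  # [0, 1, 2, 3, 4, 5, 6]
print(fits_before_gate_closes(gcl, 0, 990_000, 0, 1500, 1_000_000_000))  # False
print(fits_before_gate_closes(gcl, 0, 990_000, 0, 64, 1_000_000_000))    # True
```

The `False` result is the case a bridge must avoid: at 1 Gbit/s, a 1500-byte best-effort frame takes 12 µs, so starting it 10 µs before the class-7 window would spill into that window. Holding such frames back (a guard band) or interrupting them with frame preemption (802.1Qbu) is what keeps the critical window clean.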

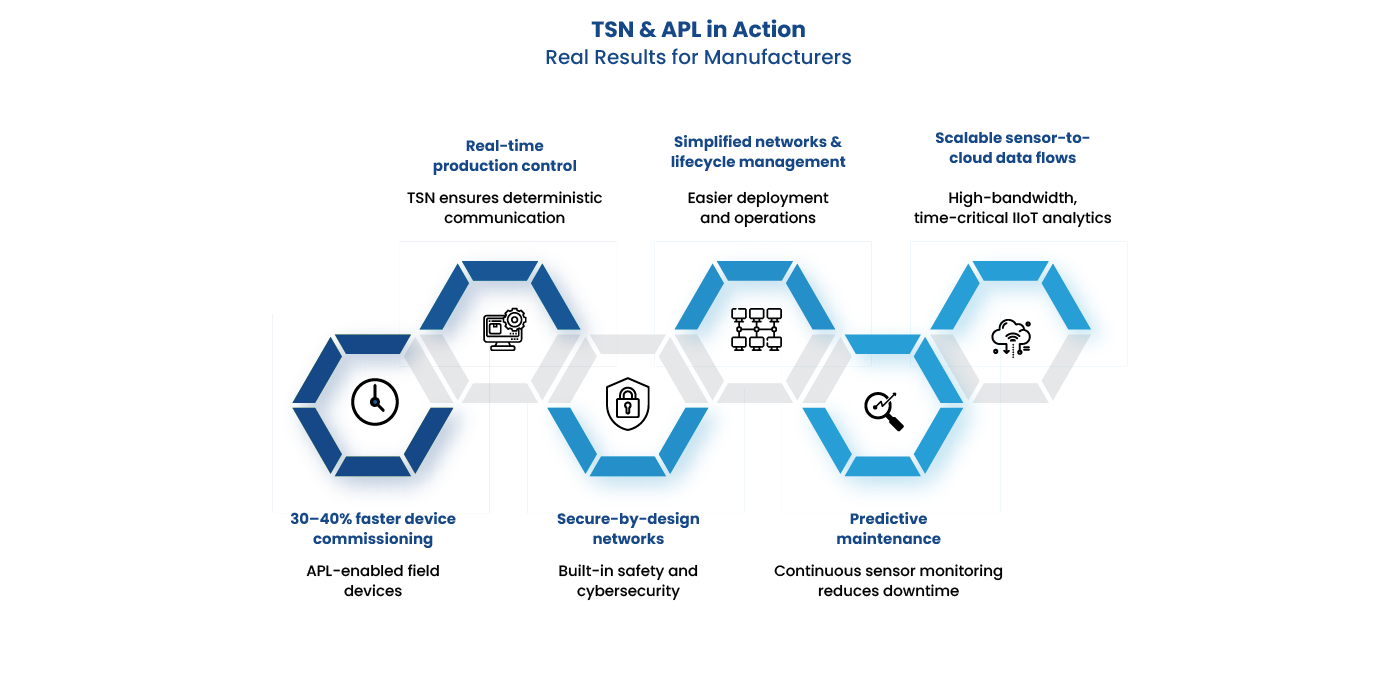

Understanding these capabilities matters because they translate directly into measurable business value. TSN reduces total cost of ownership by reducing network complexity, enables real-time decision-making and closed-loop automation, and supports scalable architectures that accelerate digital transformation. It also fosters vendor interoperability and ecosystem readiness, so enterprises can deploy best-in-class devices without being locked into proprietary systems.

We understand that customers adopting this new technology need support from partners who understand its impact. At Utthunga, we combine deep domain expertise with our Silicon-to-System capability to help customers adopt TSN, covering implementation, network simulation, and conformance testing. Beyond deployment, Utthunga helps customers migrate existing PROFINET, EtherCAT, EtherNet/IP, and OPC UA deployments to TSN, ensuring a seamless transition to a resilient, future-ready industrial network architecture.

APL: Advancing Field Device Connectivity in Industrial Networks

Plant operators and OEMs that adopt APL gain several strategic advantages. APL connects field devices directly to Ethernet and cloud ecosystems, enabling end-to-end data flow from sensors to enterprise systems. Its enhanced diagnostics support predictive maintenance, and its two-wire physical layer, based on IEEE 802.3cg 10BASE-T1L, carries both power and data over a single twisted pair, which simplifies wiring and reduces installation costs. APL’s vendor-neutral interoperability also lets organizations choose devices on merit rather than on compatibility with a proprietary system.

These capabilities unlock transformative use cases in next-generation smart plants. APL supports intelligent field devices, including smart valves, transmitters, and actuators, and accelerates brownfield modernization in chemical, oil & gas, and process industries. High-density sensor networks powered by APL enable real-time monitoring and control, enhancing operational efficiency, safety, and asset utilization.

Utthunga plays a critical role in APL adoption: developing APL-compliant device firmware and software, testing and validating devices with leading protocol stacks, and migrating legacy HART, FOUNDATION Fieldbus (FF), and PROFIBUS devices to APL-ready architectures. With Utthunga’s support, customers are modernizing their field-level connectivity, unlocking actionable insights, and building resilient, future-ready industrial networks.

TSN + APL: The Converged Future of Industrial Ethernet

Together, TSN and APL eliminate legacy network fragmentation, remove the need for protocol gateways, and simplify system engineering. The result is a single, seamless communication pathway that delivers consistent performance, full data transparency, and straightforward integration across IT and OT domains, all the way down to the sensor and actuator level.

Ready to move toward a fully converged TSN + APL architecture? Connect with us to explore solutions tailored for next-generation industrial networks.